I am a design engineer with a background in interactive and experiential design, film, and installation artwork. I believe strongly in the emancipatory potential of design and new technologies to make the world a more equitable and sustainable place to live, be it on a micro or macro scale.

I am fascinated by systems, particularly the ways in which human, organic, synthetic, and technological systems converge and interact. Much of my work sits at the intersection of art, design, science, and technology, and my work at the RCA explores technoethics, social innovation, artificial intelligence, robotics, science communication, and biodesign.

Exhibitions

Elemental, National Science Museum of Thailand, TBC 2025

Tidal Light, National Science Museum of Thailand, TBC 2023

Float, The 1851der tent, Great Exhibition Road Festival, London, 2022

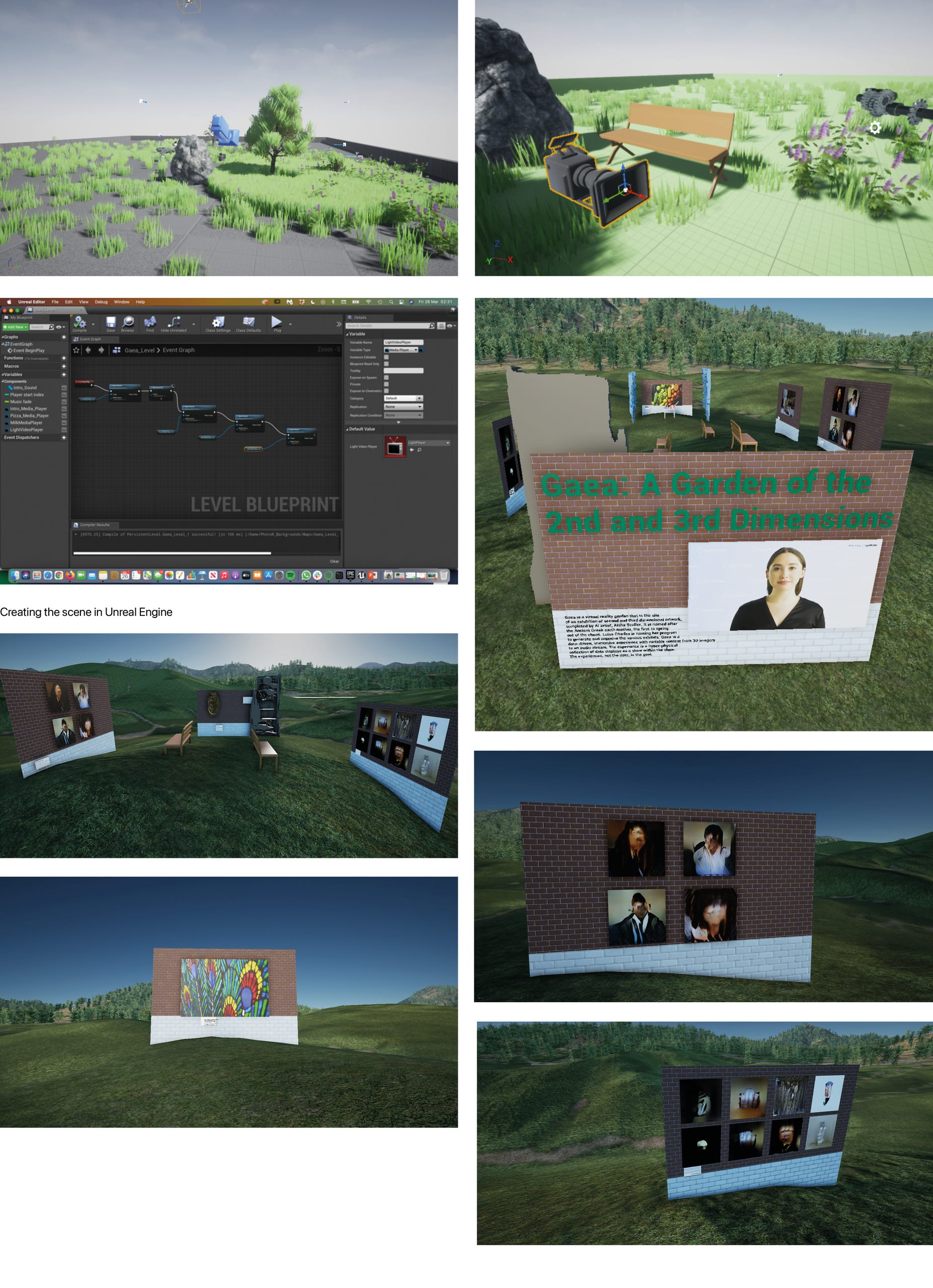

Curative, Gaea: A Garden of the 2nd and 3rd Dimensions, MIKA, New York, 2021

Tamagukiyo, Together in Europe: Creative Communities for Change, London Design Festival 2021

Linger on Matter, MODA-FAD, Disseny Hub, Barcelona, 2021

The Data Box, National Science Museum of Thailand, 2021

Scicomm Cubes, National Science Museum of Thailand, 2021

Sense of Direction, Dark Matter, Science Gallery, London, 2019

Inorganisms, Everything Happens So Much, London Design Festival 2018

Taste Sensations, Lates: Food and Drink, Science Museum, London, 2018

Kardia, Blood: Life Uncut, Science Gallery, London, 2017

Work

Special Effects, Doctor Strange in the Multiverse of Madness, Marvel Studios, 2020-2021

Props, Jurassic World Dominion, Universal Pictures, 2020

Project Manager, London Design Festival, University of the Arts London, 2019

Project Co-ordinator, Where Walworth Eats, University of the Arts London, 2019

Action Vehicles, Star Wars Episode IX, Lucasfilm Ltd., 2018-2019

Education

MA + MSc Global Innovation Design, Royal College of Art + Imperial College London, 2022 - Merit

BA (Hons) Interaction Design Arts, University of the Arts London, 2018 - First

Foundation Diploma in Art and Design, University for the Creative Arts, 2015 - Distinction